Mathematical Methods for Physics and Engineering

1. Basic definitions:

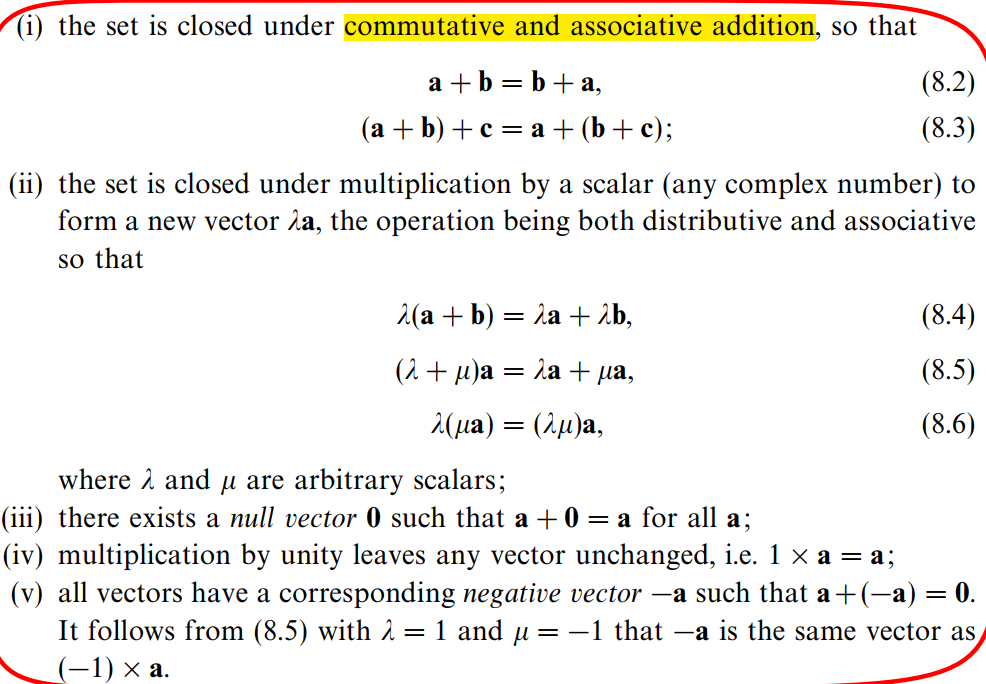

(0) Definition of linear vector space:

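A set of vectors \mathbf{a},\mathbf{b},\mathbf{c},\dots forms a linear vector space provided that: the set is closed under addition, which is commutative and associative; it is closed under multiplication by a scalar, which is distributive and associative; there exists a null vector \mathbf{0} with \mathbf{a}+\mathbf{0}=\mathbf{a}; multiplication by unity leaves any vector unchanged; and every \mathbf{a} has a negative -\mathbf{a} with \mathbf{a}+(-\mathbf{a})=\mathbf{0}.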

(1) Linearly dependent:

\alpha\mathbf{a}+\beta\mathbf{b}+\cdots+\sigma\mathbf{s}=\mathbf{0}, where \alpha,\beta,\ldots,\sigma are not all equal to 0.

(2) A complete set:

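In an N-dimensional space any N+1 vectors are linearly dependent, so for a basis \mathbf{e}_1,\mathbf{e}_2,\ldots,\mathbf{e}_N and an arbitrary vector \mathbf{x}:

\alpha\mathbf{e}_1+\beta\mathbf{e}_2+\cdots+\sigma\mathbf{e}_N+\chi\mathbf{x}=\mathbf{0}, where \alpha,\beta,\ldots,\chi are not all equal to 0, and in particular \chi \neq 0.

Dividing by \chi gives \mathbf{x}=x_{1}\mathbf{e}_{1}+x_{2}\mathbf{e}_{2}+\cdots+x_{N}\mathbf{e}_{N}=\sum_{i=1}^{N}x_{i}\mathbf{e}_{i}.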

(3) The inner product:

If \mathbf{e}_{1},\mathbf{e}_{2},\ldots,\mathbf{e}_{N} are orthonormal, then

\begin{array}{rl}&\langle\mathbf{a}|\mathbf{b}\rangle=\langle\mathbf{b}|\mathbf{a}\rangle^{*}=\sum_{i=1}^Na_i^*b_i\\ &\langle\mathbf{a}|\lambda\mathbf{b}+\mu\mathbf{c}\rangle=\lambda\langle\mathbf{a}|\mathbf{b}\rangle+\mu\langle\mathbf{a}|\mathbf{c}\rangle\end{array}

If \mathbf{e}_{1},\mathbf{e}_{2},\ldots,\mathbf{e}_{N} are not orthonormal, then

\begin{array}{rl} \langle \mathbf{a} | \mathbf{b} \rangle &= \left\langle \sum_{i=1}^{N} a_i \mathbf{e}_i \left| \sum_{j=1}^{N} b_j \mathbf{e}_j \right. \right\rangle\\ &= \sum_{i=1}^{N} \sum_{j=1}^{N} a_i^* b_j \langle \mathbf{e}_i | \mathbf{e}_j \rangle\\ &= \sum_{i=1}^{N} \sum_{j=1}^{N} a_i^* G_{ij} b_j \end{array}

where G_{ij}=\langle\mathbf{e}_i|\mathbf{e}_j\rangle =G_{ji}^*. We also know that

\langle\mathbf{a}|\mathbf{a}\rangle^{*}=\sum_{i=1}^{N}\sum_{j=1}^{N}a_{i}G_{ij}^{*}a_{j}^{*}=\sum_{j=1}^{N}\sum_{i=1}^{N}a_{j}^{*}G_{ji}a_{i}=\langle\mathbf{a}|\mathbf{a}\rangle,

which means that \langle\mathbf{a}|\mathbf{a}\rangle is real.

(4) Linear operator:

A(\lambda x)=\lambda A(x)\\ A(x+y)=A(x)+A(y)

(5) Associative:

\mathrm{A(BC)\equiv(AB)C}. In general, \mathsf{AB}\neq\mathsf{BA}.

(6) Functions:

For example, \exp A=\sum_{n=0}^{\infty}\frac{\mathrm{A}^n}{n!}.

(7) Transposition:

(\mathrm{ABC}\cdots\mathrm{G})^\mathrm{T}=\mathrm{G}^\mathrm{T}\cdots\mathrm{C}^\mathrm{T}\mathrm{B}^\mathrm{T}\mathrm{A}^\mathrm{T}.

(8) Hermitian conjugate:

(\mathrm{AB}\cdots\mathrm{G})^{\dagger}=\mathrm{G}^{\dagger}\cdots\mathrm{B}^{\dagger}\mathrm{A}^{\dagger}, where \mathbf{A}^{\dagger}=(\mathbf{A}^{*})^{\mathrm{T}}=(\mathbf{A}^{\mathrm{T}})^{*}.

In particular,

\langle\mathbf{a}|\mathbf{b}\rangle =\mathbf{a}^{\dagger}\mathbf{b}=(a_{1}^{*} a_{2}^{*} \cdots a_{N}^{*})\left(\begin{array}{c}{{b_{1}}}\\{{b_{2}}}\\{\vdots}\\{{b_{N}}}\end{array}\right)=\sum_{i=1}^{N}a_{i}^{*}b_{i} (orthonormal basis: \langle\mathbf{e}_{i}|\mathbf{e}_{j}\rangle= \delta_{ij});

\langle\mathbf{a}|\mathbf{b}\rangle=\sum_{i=1}^{N}\sum_{j=1}^{N}a_{i}^{*}G_{ij}b_{j}=\mathbf{a}^{\dagger}\mathbf{Gb} (non-orthonormal basis: \langle\mathbf{e}_{i}|\mathbf{e}_{j}\rangle=G_{ij}\neq\delta_{ij}).
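
The two forms of the inner product can be compared numerically. The following is a minimal sketch in plain Python; the function name `inner` and the sample matrix `G` are illustrative assumptions, not taken from the book. It checks that \langle\mathbf{a}|\mathbf{b}\rangle=\langle\mathbf{b}|\mathbf{a}\rangle^{*} and that \langle\mathbf{a}|\mathbf{a}\rangle is real when G_{ij}=G_{ji}^*.

```python
def inner(a, b, G=None):
    """<a|b> = sum_ij conj(a_i) G_ij b_j; G=None means an orthonormal basis."""
    n = len(a)
    if G is None:
        return sum(complex(a[i]).conjugate() * b[i] for i in range(n))
    return sum(complex(a[i]).conjugate() * G[i][j] * b[j]
               for i in range(n) for j in range(n))

# Hypothetical metric for a non-orthonormal basis; note G_ij = conj(G_ji).
G = [[1, 0.5j], [-0.5j, 1]]
a = [1 + 1j, 2]
b = [3, 1 - 1j]

ab = inner(a, b, G)
ba = inner(b, a, G)
aa = inner(a, a, G)
print(ab, ba.conjugate(), aa)  # the first two agree; the last has zero imaginary part
```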

(9) Trace:

\mathrm{Tr} \mathrm{A}=\sum_{i=1}^{N}A_{ii}

\text{TrAB}=\sum_{i=1}^N\sum_{j=1}^NA_{ij}B_{ji}=\text{TrBA}

\text{Tr ABC = Tr BCA = Tr CAB}
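
The cyclic property is easy to confirm on small matrices. This is a sketch with arbitrarily chosen 2×2 integer matrices (the helper names `matmul` and `trace` are assumptions for illustration); it also shows that a non-cyclic reordering generally changes the trace.

```python
def matmul(A, B):
    """P_ij = sum_k A_ik B_kj for matrices stored as lists of rows."""
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))] for i in range(len(A))]

def trace(A):
    return sum(A[i][i] for i in range(len(A)))

A = [[1, 2], [3, 4]]
B = [[0, 1], [5, -2]]
C = [[2, 0], [1, 3]]

tr_abc = trace(matmul(matmul(A, B), C))
tr_bca = trace(matmul(matmul(B, C), A))
tr_cab = trace(matmul(matmul(C, A), B))
tr_acb = trace(matmul(matmul(A, C), B))  # not a cyclic permutation of ABC
print(tr_abc, tr_bca, tr_cab, tr_acb)
```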

(10) Determinant:

|A_{n\times n}| = \sum_{k=1}^n(-1)^{i+k}A_{ik}|M_{ik}|=\sum_{i=1}^n(-1)^{i+k}A_{ik}|M_{ik}|, where |M_{ik}| is the determinant of the (n-1)\times (n-1) matrix obtained by removing the ith row and kth column from A_{n\times n}. The first sum expands along a fixed row i and the second along a fixed column k. |M_{ik}| is called the minor, and C_{ik}=(-1)^{i+k}|M_{ik}| is called the cofactor. Briefly, |A|=\sum_kA_{ik}C_{ik}=\sum_iA_{ik}C_{ik}.

Properties of determinant:

(i) |A^{\mathrm{T}}|=|A|.

(ii) |A^\dagger|=|A^*|=|A|^*.

(iii) Interchanging two rows or two columns only changes its sign.

(iv) |\lambda A|=\lambda^N|A|.

(v) Identical rows or columns lead to |A|=0.

(vi) Adding a constant multiple of one row (or column) to another row (or column) does not change the determinant.

(vii) |AB\cdots G|=|A||B|\cdots|G|=|A||G|\cdots|B|=|A\cdots GB|.
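
The cofactor expansion and properties (i), (iii), (iv) and (vi) can be checked with a short recursive routine. This is a sketch only: the names `minor` and `det` and the test matrix are assumptions, and the recursion costs O(n!) operations, so it is meant for small matrices.

```python
def minor(A, i, k):
    """Copy of A with row i and column k removed (0-based indices)."""
    return [row[:k] + row[k + 1:] for r, row in enumerate(A) if r != i]

def det(A):
    """Determinant by cofactor (Laplace) expansion along the first row."""
    if not A:  # the empty 0x0 determinant is taken as 1
        return 1
    return sum((-1) ** k * A[0][k] * det(minor(A, 0, k)) for k in range(len(A)))

A = [[2, 0, 1], [1, 3, -1], [0, 5, 4]]
A_T = [list(col) for col in zip(*A)]                             # property (i)
swapped = [A[1], A[0], A[2]]                                     # property (iii)
scaled = [[2 * x for x in row] for row in A]                     # property (iv), N = 3
sheared = [A[0], [x + 3 * y for x, y in zip(A[1], A[0])], A[2]]  # property (vi)
print(det(A), det(A_T), det(swapped), det(scaled), det(sheared))
```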

(11) Inverse:

(i) Find inverse: (\mathrm{A}^{-1})_{ij}=\frac{(\mathrm{C}^{\mathrm{T}})_{ij}}{|\mathrm{A}|}=\frac{\mathrm{C}_{ji}}{|\mathrm{A}|} or \mathrm{A}^{-1}=\frac{\mathrm{C}^{\mathrm{T}}}{|\mathrm{A}|}.

(ii) Inverse and singular: |A|\neq0 \Leftrightarrow A^{-1}\ exists.

(iii) |A^{-1}|=\frac1{|A|}, provided that |A|\neq0.

(iv) Properties:

\begin{aligned} (\mathrm{A}^{\mathrm{T}})^{-1}=(\mathrm{A}^{-1})^{\mathrm{T}}, (\mathrm{A}^{\dagger})^{-1}=(\mathrm{A}^{-1})^{\dagger}, (\mathrm{AB}\cdots\mathrm{G})^{-1}=\mathrm{G}^{-1}\cdots\mathrm{B}^{-1}\mathrm{A}^{-1} \end{aligned}.

(v) Eigenvalues: if \mathrm{A}\mathrm{x}^{i}=\lambda_{i}\mathrm{x}^{i}, then \mathrm{A}^{-1}\mathrm{x}^{i}=\frac{1}{\lambda_{i}}\mathrm{x}^{i} (proved by multiplying \mathrm{A}\mathrm{x}^{i}=\lambda_{i}\mathrm{x}^{i} on the left by \mathrm{A}^{-1}).
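
The cofactor formula for the inverse can be sketched in exact rational arithmetic. The helper names and the sample matrix are assumptions for illustration; for large matrices an elimination method would be used instead, since cofactor expansion is very slow.

```python
from fractions import Fraction

def minor(A, i, k):
    return [row[:k] + row[k + 1:] for r, row in enumerate(A) if r != i]

def det(A):
    if not A:  # the empty 0x0 determinant is taken as 1
        return 1
    return sum((-1) ** k * A[0][k] * det(minor(A, 0, k)) for k in range(len(A)))

def inverse(A):
    """(A^{-1})_ij = C_ji / |A|, with C_ji = (-1)^(i+j) |M_ji|."""
    n, d = len(A), det(A)
    if d == 0:
        raise ValueError("matrix is singular, no inverse exists")
    return [[Fraction((-1) ** (i + j) * det(minor(A, j, i)), d)
             for j in range(n)] for i in range(n)]

def matmul(A, B):
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))] for i in range(len(A))]

A = [[2, 0, 1], [1, 3, -1], [0, 5, 4]]
A_inv = inverse(A)
print(matmul(A, A_inv))      # the identity matrix
print(det(A_inv), det(A))    # |A^{-1}| = 1/|A|
```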

(12) Rank:

(i) Definition 1:

\mathbf{A}=\left(\begin{array}{cccc}\uparrow&\uparrow&&\uparrow\\\mathbf{v}_1&\mathbf{v}_2&\ldots&\mathbf{v}_N\\\downarrow&\downarrow&&\downarrow\end{array}\right) or \mathbf{A}=\left(\begin{array}{ccc}\leftarrow&\mathbf{w}_1&\to\\\leftarrow&\mathbf{w}_2&\to\\&\vdots&\\\leftarrow&\mathbf{w}_M&\to\end{array}\right)

The rank is the number of linearly independent vectors in the set of columns \mathbf{v}_{1},\mathbf{v}_{2},\ldots,\mathbf{v}_{N} or, equivalently, in the set of rows \mathbf{w}_{1},\mathbf{w}_{2},\ldots,\mathbf{w}_{M}.

(ii) Definition 2:

The rank of matrix A_{M\times N} is the size of the largest square submatrix of A_{M\times N} whose determinant is non-zero.

(13) Eigenvalues:

(i) Mutually orthogonal:

If all N eigenvalues of a normal matrix A are distinct then all N eigenvectors of A are mutually orthogonal, since (\lambda_{i}-\lambda_{j})(\mathbf{x}^{i})^{\dagger}\mathbf{x}^{j}=0.

(ii) Degenerate eigenvectors:

If \lambda_1 is k-fold degenerate, with eigenvectors x^1,x^2,\ldots,x^k, then any linear combination of these vectors is also an eigenvector: Az\equiv A\sum_{i=1}^kc_ix^i=\sum_{i=1}^kc_i\lambda_1x^i=\lambda_1z.

(iii) Simultaneous eigenvectors:

[A,B]=0 \Leftrightarrow A and B have a common set of eigenvectors (for the proof see page 278).

It does not matter if either A or B has degenerate eigenvalues: within each degenerate subspace of one matrix we can still construct a full set of eigenvectors of the other by taking suitable linear combinations, orthogonalized by Gram-Schmidt where needed.

(iv) Check eigenvalues:

As a check, the computed eigenvalues should satisfy \sum_{i=1}^N\lambda_i=\text{Tr A}.

(14) Quadratic forms:

(i) Definition: Q(\mathbf{x})=\langle\mathbf{x}|\mathcal{A}\mathbf{x}\rangle or Q(\mathrm{x})=\mathrm{x}^\mathrm{T}\mathrm{A}\mathrm{x} (Q is real).

Q>0 for all x: A is called positive definite; Q\geq0 for all x: A is called positive semi-definite.

Example:

Prove that any quadratic form Q(\mathrm{x})=\mathrm{x}^\mathrm{T}\mathrm{M}\mathrm{x} can be written as Q(\mathrm{x})=\mathrm{x}^\mathrm{T}\mathrm{A}\mathrm{x} with a symmetric matrix A (hint: split M into a symmetric part A and an antisymmetric part B).

(ii) Extremizing the quadratic forms:

Extremizing Q=\mathrm{x}^{\mathrm{T}}\mathrm{Ax} subject to \mathbf{x}^{\mathrm{T}}\mathbf{x}=1 is equivalent to extremizing \lambda(\mathrm{x})=\frac{\mathrm{x}^\mathrm{T}\mathrm{A}\mathrm{x}}{\mathrm{x}^\mathrm{T}\mathrm{x}}. The eigenvectors of A lie along those directions in space for which the quadratic form Q(\mathrm{x})=\mathrm{x}^\mathrm{T}\mathrm{A}\mathrm{x} has stationary values.

Example:

Prove that if \lambda(\mathrm{x}) is stationary then \mathrm{x} is an eigenvector of \mathrm{A} and \lambda(\mathrm{x}) is equal to the corresponding eigenvalue (Solution 1 hint: \mathrm{x^{T}A\Delta x=(\Delta x^{T})Ax} and \mathrm{x^{T}\Delta x=(\Delta x^{T})x}; Solution 2 hint: \Delta[\mathrm{x^TAx}-\lambda(\mathrm{x^Tx}-1)]=0).
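
The stationarity claim can be illustrated numerically. This sketch is not the proof asked for above; it assumes a hypothetical 2×2 symmetric matrix whose eigenvectors (1,1) and (1,-1) have eigenvalues 3 and 1, and it estimates the gradient of \lambda(\mathrm{x}) by central differences.

```python
def rayleigh(A, x):
    """lambda(x) = x^T A x / x^T x for a real matrix A and real vector x."""
    Ax = [sum(A[i][j] * x[j] for j in range(len(x))) for i in range(len(x))]
    return sum(xi * yi for xi, yi in zip(x, Ax)) / sum(xi * xi for xi in x)

def gradient(A, x, h=1e-6):
    """Central-difference estimate of the gradient of lambda(x)."""
    g = []
    for i in range(len(x)):
        xp, xm = list(x), list(x)
        xp[i] += h
        xm[i] -= h
        g.append((rayleigh(A, xp) - rayleigh(A, xm)) / (2 * h))
    return g

A = [[2.0, 1.0], [1.0, 2.0]]
for x in ([1.0, 1.0], [1.0, -1.0], [1.0, 0.0]):
    # gradient ~ 0 only for the first two vectors, which are eigenvectors
    print(x, rayleigh(A, x), gradient(A, x))
```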

(iii) Quadratic surfaces:

A quadratic surface is defined by \mathrm{x}^\mathrm{T}\mathrm{A}\mathrm{x}=1.

Properties:

a. The stationary values of its radius lie in the directions of the eigenvectors of A (in particular, in three dimensions its principal axes, which have principal radii \lambda_i^{-1/2}, lie along its three mutually orthogonal eigenvectors).

b. If two of the eigenvalues are equal (degenerate), the surface has rotational symmetry about the third principal axis, and the two degenerate eigenvectors are not uniquely defined.

(15) Hermitian forms:

(i) Definition: H(\mathbf{x})\equiv\mathbf{x}^\dagger\mathbf{A}\mathbf{x}, where \mathbf{A} is Hermitian (H is real).

Example:

Prove that H is real (hint: H^*=(H^{\mathrm{T}})^*).

(16) The range and null space:

(i) Definition:

\mathcal{A}_{M\times N}\mathbf{x}=\mathbf{b}: this maps a vector \mathbf{x} in the N-dimensional space \mathbf{V} to a vector \mathbf{b} in the M-dimensional space \mathbf{W}. The set of all such images \mathbf{b} is a subspace of \mathbf{W} called the range of \mathcal{A}; its dimension is called the rank of \mathcal{A}.

Example:

Prove that if \mathcal{A} is singular then there is a subspace of \mathbf{V} that is mapped onto the zero vector \mathbf{0} of \mathbf{W}.

(hint1: \mathcal{A} must be singular if M\neq N;

hint2: rank(A_{M\times N}) \leq min(M,N), with strict inequality when a square A is singular;

hint3: \mathbf{A}\mathbf{x}=\left(\begin{array}{ccc}\leftarrow&\mathbf{A}_1&\to\\\leftarrow&\mathbf{A}_2&\to\\&\vdots&\end{array}\right)\mathbf{x}=\left(\begin{array}{c}\mathbf{A}_1\mathbf{x}\\\mathbf{A}_2\mathbf{x}\\\vdots\end{array}\right)=\mathbf{0}, where \mathbf{A}_i is the ith row of \mathbf{A}).

The subspace formed by all such \mathbf{x} is called the null space of \mathcal{A}; its dimension is called the nullity of \mathcal{A}.

Example:

Prove that \mathrm{rank~A+nullity~A=N}.

(hint1: \mathbf{A}\mathbf{x}=\mathbf{b} \Rightarrow x_{1}\mathbf{v}_{1}+x_{2}\mathbf{v}_{2}+\cdots+x_{N}\mathbf{v}_{N}=\mathbf{b}, so the range of \mathbf{A} is the span of its columns \mathbf{v}_i, and the dimension of that span is rank(\mathbf{A});

hint2: \mathbf{A}\mathbf{y}=\mathbf{0} \Rightarrow y_1\mathbf{v}_1+y_2\mathbf{v}_2+\cdots+y_N\mathbf{v}_N=\mathbf{0}, so each independent \mathbf{y} expresses one linear dependence among the \mathbf{v}_i, and the nullity of \mathbf{A} is N-rank(\mathbf{A}).)

(ii) Existence of solutions:

\mathbf{A}=\left(\begin{array}{cccc}\uparrow&\uparrow&&\uparrow\\\mathbf{v}_1&\mathbf{v}_2&\ldots&\mathbf{v}_N\\\downarrow&\downarrow&&\downarrow\end{array}\right), \mathbf{A}\mathbf{x}=\mathbf{b}, \mathbf{M}=\left(\begin{array}{ccccc}\uparrow&\uparrow&&\uparrow&\uparrow\\\mathbf{v}_1&\mathbf{v}_2&\ldots&\mathbf{v}_N&\mathbf b\\\downarrow&\downarrow&&\downarrow&\downarrow\end{array}\right), where \mathbf{M} is the augmented matrix.

If Rank(\mathbf{A})\neq Rank(\mathbf{M}), then there is no solution. Otherwise:

a. Unique solution: Rank(\mathbf{A})= Rank(\mathbf{M})=N.

b. Infinitely many solutions: Rank(\mathbf{A}) = Rank(\mathbf{M})=r < N; the solutions form an (N-r)-parameter family.
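
The rank test can be sketched with Gaussian elimination in exact rational arithmetic. The function names `rank` and `classify` and the sample systems are assumptions made for illustration; the rank here is counted as the number of pivots, which equals the number of linearly independent rows.

```python
from fractions import Fraction

def rank(A):
    """Rank of A, counted as the number of pivots in Gaussian elimination."""
    M = [[Fraction(x) for x in row] for row in A]
    rows, cols = len(M), len(M[0])
    r = 0  # number of pivots found so far
    for c in range(cols):
        pivot = next((i for i in range(r, rows) if M[i][c] != 0), None)
        if pivot is None:
            continue
        M[r], M[pivot] = M[pivot], M[r]
        for i in range(r + 1, rows):
            factor = M[i][c] / M[r][c]
            M[i] = [x - factor * y for x, y in zip(M[i], M[r])]
        r += 1
        if r == rows:
            break
    return r

def classify(A, b):
    """Return (kind, number of free parameters) for the system A x = b."""
    N = len(A[0])
    augmented = [row + [bi] for row, bi in zip(A, b)]
    rA, rM = rank(A), rank(augmented)
    if rA != rM:
        return ("no solution", None)
    if rA == N:
        return ("unique solution", 0)
    return ("infinitely many solutions", N - rA)

print(classify([[1, 1], [1, 1]], [1, 3]))
print(classify([[1, 1], [1, -1]], [2, 0]))
print(classify([[1, 1], [1, 1]], [2, 2]))
```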

(17) Finding solutions:

(i) Direct inversion:

If \mathrm{A}\mathrm{x}=\mathrm{b},|\mathrm{A}|\neq0, then \mathrm{x}=\mathrm{A}^{-1}\mathrm{b}.

(ii) LU decomposition:
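
In the LU decomposition \mathrm{A}=\mathrm{LU}, with \mathrm{L} lower triangular (unit diagonal) and \mathrm{U} upper triangular, \mathrm{Ax=b} is solved in two triangular steps: \mathrm{Ly=b} by forward substitution, then \mathrm{Ux=y} by back substitution. The following is a minimal sketch assuming the Doolittle convention and no pivoting, so it fails on a zero pivot; the function names and the sample system are illustrative assumptions.

```python
from fractions import Fraction

def lu_decompose(A):
    """Doolittle factorisation A = L U with unit diagonal in L (no pivoting)."""
    A = [[Fraction(x) for x in row] for row in A]
    n = len(A)
    L = [[Fraction(int(i == j)) for j in range(n)] for i in range(n)]
    U = [[Fraction(0)] * n for _ in range(n)]
    for i in range(n):
        for j in range(i, n):          # row i of U
            U[i][j] = A[i][j] - sum(L[i][k] * U[k][j] for k in range(i))
        if U[i][i] == 0:
            raise ValueError("zero pivot: this sketch does not pivot")
        for j in range(i + 1, n):      # column i of L
            L[j][i] = (A[j][i] - sum(L[j][k] * U[k][i] for k in range(i))) / U[i][i]
    return L, U

def lu_solve(L, U, b):
    n = len(b)
    y = [Fraction(0)] * n
    for i in range(n):                 # forward substitution: L y = b
        y[i] = Fraction(b[i]) - sum(L[i][k] * y[k] for k in range(i))
    x = [Fraction(0)] * n
    for i in reversed(range(n)):       # back substitution: U x = y
        x[i] = (y[i] - sum(U[i][k] * x[k] for k in range(i + 1, n))) / U[i][i]
    return x

A = [[2, 1, 1], [4, 3, 3], [8, 7, 9]]
L, U = lu_decompose(A)
x = lu_solve(L, U, [4, 10, 24])
print(L, U, x)
```

Once L and U are known, further right-hand sides b can be solved without refactorising A.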

(iii) Cholesky decomposition:

\mathrm{A}=\mathrm{LL}^\dagger, where \mathrm{L} is lower triangular and \mathrm{A} is Hermitian (symmetric if real) and positive semi-definite, since \mathrm{x^{\dagger}Ax=x^{\dagger}LL^{\dagger}x=(L^{\dagger}x)^{\dagger}(L^{\dagger}x)}\geq0. If \mathrm{A} is non-singular then it must be positive definite. Note that, unlike in the LU decomposition, the diagonal elements of \mathrm{L} are not all equal to 1.
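
For a real symmetric positive definite matrix the factor L can be built one row at a time. The sketch below is restricted to that real case, so \mathrm{L}^\dagger=\mathrm{L}^\mathrm{T}; the function name `cholesky` and the test matrix are illustrative assumptions.

```python
import math

def cholesky(A):
    """Lower-triangular L with A = L L^T for a real symmetric positive definite A."""
    n = len(A)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = A[i][j] - sum(L[i][k] * L[j][k] for k in range(j))
            if i == j:
                if s <= 0:
                    raise ValueError("matrix is not positive definite")
                L[i][j] = math.sqrt(s)   # diagonal entries are generally not 1
            else:
                L[i][j] = s / L[j][j]
    return L

A = [[4, 12, -16], [12, 37, -43], [-16, -43, 98]]
L = cholesky(A)
print(L)
```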

(iv) Cramer's rule:

For a set of three simultaneous linear equations \mathsf{A}\mathbf{x}=\mathbf{b} with |\mathsf{A}|\neq0:

x_1=\frac{\Delta_1}{|\mathsf{A}|},\quad x_2=\frac{\Delta_2}{|\mathsf{A}|},\quad x_3=\frac{\Delta_3}{|\mathsf{A}|},

where \Delta_1=\begin{vmatrix}b_1&A_{12}&A_{13}\\b_2&A_{22}&A_{23}\\b_3&A_{32}&A_{33}\end{vmatrix},\quad\Delta_2=\begin{vmatrix}A_{11}&b_1&A_{13}\\A_{21}&b_2&A_{23}\\A_{31}&b_3&A_{33}\end{vmatrix},\quad\Delta_3=\begin{vmatrix}A_{11}&A_{12}&b_1\\A_{21}&A_{22}&b_2\\A_{31}&A_{32}&b_3\end{vmatrix}. Higher dimensions follow the same pattern: \Delta_i is |\mathsf{A}| with its ith column replaced by \mathbf{b}.
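
Cramer's rule for the 3×3 case translates directly into code. This is a sketch using exact fractions; the names `det3` and `cramer3` and the sample system are assumptions chosen for illustration.

```python
from fractions import Fraction

def det3(m):
    """Determinant of a 3x3 matrix by expansion along the first row."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))

def cramer3(A, b):
    """Solve A x = b via x_i = Delta_i / |A|, where Delta_i has column i replaced by b."""
    d = det3(A)
    if d == 0:
        raise ValueError("|A| = 0: Cramer's rule does not apply")
    xs = []
    for col in range(3):
        A_i = [row[:col] + [bi] + row[col + 1:] for row, bi in zip(A, b)]
        xs.append(Fraction(det3(A_i), d))
    return xs

A = [[2, 4, 3], [1, -2, -2], [-3, 3, 2]]
b = [4, 0, -7]
x = cramer3(A, b)
print(x)
```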

Implement:

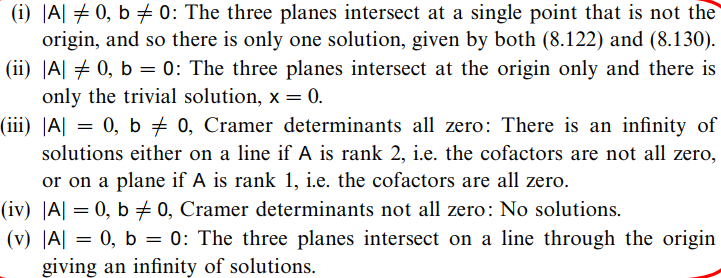

If we regard this set of equations as three planes, then the perpendicular distance from plane i to the origin is d_{i}=\frac{b_{i}}{\left(A_{i1}^{2}+A_{i2}^{2}+A_{i3}^{2}\right)^{1/2}}, where i=1,2,3. Reconsidering the problem geometrically gives a summarized result (from MMPE P301):

(v) Singular value decomposition(SVD):

Definition (from MMPE P302): \mathrm{A}=\mathrm{USV}^\dagger=\sum_{i=1}^{r}s_{i}\mathrm{u}^{i}(\mathrm{v}^{i})^{\dagger}\Rightarrow \mathbf{\hat{x}}=\mathbf{V}\mathbf{\overline{S}}\mathbf{U}^\dagger\mathbf{b}.

(i) The square matrices \mathrm{U} and \mathrm{V} have dimensions \mathrm{M \times M} and \mathrm{N \times N} respectively, and are unitary.

(ii) The matrix \mathrm{S} has dimensions \mathrm{M \times N} (the same as \mathrm{A}) and is diagonal in the sense that \mathrm{S_{ij}} = \mathrm{0} for i\neq j. Denote its diagonal elements (the singular values) by s_i for i=1,2,\dots,p, where p=min(M,N).

(iii) \mathbf{\overline{S}} is the \mathrm{N \times M} matrix obtained by transposing \mathbf{S} and replacing each non-zero s_i by \frac{1}{s_i}; zero singular values are left as 0.

Properties:

(i) \mathrm{S} \mathrm{S}^\dagger and \mathrm{S}^\dagger \mathrm{S} are \mathrm{M \times M} and \mathrm{N \times N} diagonal matrices with diagonal elements s_i^2 for i=1,2,\ldots,p, where p=min(M,N). All other diagonal elements (i>p) are 0.

(ii) V^{-1}A^{\dagger}AV and U^{-1}AA^{\dagger}U are diagonal (because \mathrm{A}^{\dagger}\mathrm{A}=\mathrm{VS}^{\dagger}\mathrm{SV}^{\dagger},\mathrm{AA^\dagger=USS^\dagger U^\dagger}).

(iii) The columns of V are the normalized eigenvectors (v^i,i=1,2,\ldots,N) of the Hermitian matrix \mathrm{A}^{\dagger}\mathrm{A}; the columns of U are the normalized eigenvectors (u^i,i=1,2,\ldots,M) of the Hermitian matrix \mathrm{A}\mathrm{A}^{\dagger}. The s_i^2 are the eigenvalues of the smaller of \mathrm{A}^{\dagger}\mathrm{A} and \mathrm{A}\mathrm{A}^{\dagger}; the larger one has the same eigenvalues s_i^2, and all its remaining eigenvalues are 0. This means that once you know the eigenvalues of either \mathrm{A}^{\dagger}\mathrm{A} or \mathrm{A}\mathrm{A}^{\dagger}, you immediately know those of the other.

(iv) The s_i can be chosen real and non-negative, because \mathrm{A}^{\dagger}\mathrm{A} is Hermitian and positive semi-definite, so its eigenvalues s_i^2 are real and non-negative. To make \mathrm{S} unique, order them as s_{1}\geq s_{2}\geq\cdots\geq s_{p}.

(v) If \mathrm{A}=\sum_{i=1}^{r}s_{i}\mathrm{u}^{i}(\mathrm{v}^{i})^{\dagger} with rank(A)=r, then the vectors \mathrm{u}^i, i=1,2,\ldots,r, form an orthonormal basis for the range of \mathrm{A}; in particular, \mathrm{Av}^i=0 for i=r+1,r+2,\ldots,N, so these \mathrm{v}^i span the null space. Note that r may be less than p.

(vi) We sometimes need Gram-Schmidt orthogonalization to find the eigenvectors belonging to the eigenvalue \mathrm{0} (see the example on P305).

(vii) When \mathrm{A} is singular, the general solution built from \mathrm{x=V\overline{S}U^\dagger b} may look like \mathbf{x}=\frac{1}{8}(1\quad1\quad1\quad1)^{\mathrm{T}}+\alpha(1\quad-1\quad1\quad-1)^{\mathrm{T}}, which contains an undetermined parameter \alpha multiplying a null-space vector (P307).
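
The properties above can be traced through a small singular example. The following worked block is an illustration only: the matrix \mathrm{A} and the vector \mathbf{b} are chosen here for convenience and are not taken from the book.

```latex
% Hypothetical 2x2 example: A has rank r = 1, so s_2 = 0.
\mathrm{A}=\begin{pmatrix}1&1\\1&1\end{pmatrix},\qquad
\mathrm{A}^{\dagger}\mathrm{A}=\begin{pmatrix}2&2\\2&2\end{pmatrix}
\;\Rightarrow\; s_1^2=4,\; s_2^2=0 .

% Normalized eigenvectors of A^\dagger A and A A^\dagger:
\mathrm{v}^1=\mathrm{u}^1=\tfrac{1}{\sqrt2}\begin{pmatrix}1\\1\end{pmatrix},\qquad
\mathrm{v}^2=\mathrm{u}^2=\tfrac{1}{\sqrt2}\begin{pmatrix}1\\-1\end{pmatrix},\qquad
\mathrm{S}=\begin{pmatrix}2&0\\0&0\end{pmatrix},\qquad
\overline{\mathrm{S}}=\begin{pmatrix}\tfrac12&0\\0&0\end{pmatrix}.

% Check: A = s_1 u^1 (v^1)^\dagger.
s_1\mathrm{u}^1(\mathrm{v}^1)^{\dagger}
 =2\cdot\tfrac12\begin{pmatrix}1&1\\1&1\end{pmatrix}=\mathrm{A}.

% Take b = (1, 3)^T, which is NOT in the range of A:
\mathrm{U}^{\dagger}\mathbf{b}=\begin{pmatrix}2\sqrt2\\-\sqrt2\end{pmatrix},\qquad
\overline{\mathrm{S}}\mathrm{U}^{\dagger}\mathbf{b}=\begin{pmatrix}\sqrt2\\0\end{pmatrix},\qquad
\hat{\mathbf{x}}=\mathrm{V}\overline{\mathrm{S}}\mathrm{U}^{\dagger}\mathbf{b}
 =\begin{pmatrix}1\\1\end{pmatrix}.

% Residual and the family of minimizers:
\mathrm{A}\hat{\mathbf{x}}-\mathbf{b}=\begin{pmatrix}1\\-1\end{pmatrix},\qquad
|\mathrm{A}\hat{\mathbf{x}}-\mathbf{b}|=\sqrt2,\qquad
\mathbf{x}=\hat{\mathbf{x}}+\alpha\begin{pmatrix}1\\-1\end{pmatrix}
\text{ gives the same residual for any } \alpha .
```

Minimizing (x_1+x_2-1)^2+(x_1+x_2-3)^2 directly gives x_1+x_2=2, which agrees with \hat{\mathbf{x}}=(1\quad1)^{\mathrm{T}} plus an arbitrary multiple of the null-space vector \mathrm{v}^2.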

Example1:

Prove that \mathrm{Av}^{i}=s_{i}\mathrm{u}^{i} and \mathrm{A}^{\dagger}\mathrm{u}^{i}=s_{i}\mathrm{v}^{i} for i=1,2,\ldots,p, where p=min(M,N); and that \mathrm{Av}^{i}=0 or \mathrm{A}^{\dagger}\mathrm{u}^{i}=0 for i=p+1,p+2,\ldots (hint: work out what \mathrm{AV} and \mathrm{A^{\dagger}U} are equal to).

★Furthermore, for s_i\neq0 we find \mathbf{v}^i=\frac{1}{s_i}\mathbf{A}^\dagger\mathbf{u}^i and \mathbf{u}^i=\frac{1}{s_i}\mathbf{A}\mathbf{v}^i.

Example2:

Prove that \mathbf{\hat{x}}=\mathbf{V}\mathbf{\overline{S}}\mathbf{U}^\dagger\mathbf{b} is indeed the solution of \mathbf{A\hat{x}}=\mathbf{b}.

(hint: write \mathrm{A\hat{x}-b=US\overline{S}U^\dagger b-b=U(S\overline{S}-I)U^\dagger b}, whose RHS vanishes when b lies in the range of A)

Example3:

Prove that even when \mathbf{b} does not lie in the range of \mathrm{A}, \mathbf{\hat{x}}=\mathbf{V}\mathbf{\overline{S}}\mathbf{U}^\dagger\mathbf{b} minimizes the residual \epsilon=|\mathrm{Ax-b}|:

Assume that there is an alternative \mathrm{\hat{x}_{new}} = \mathrm{\hat{x}} +\mathrm{\hat{x}'} which has a smaller residual \mathrm{|A\hat{x}_{new}-b|}. Writing \mathrm{A\hat{x}'=b'}, the residual vector becomes:

\mathrm{A\hat{x}_{new}-b=U(S\overline{S}-I)U^\dagger b+b'=U[(S\overline{S}-I)U^\dagger b+U^\dagger b']}.

The diagonal matrix \mathrm{S\overline{S}-I} has non-zero entries only for j>r, whereas \mathrm{b'} lies in the range of \mathsf{A}, so (\mathsf{U}^\dagger\mathsf{b'})_j can be non-zero only for j\leq r. (Be aware that, because \mathsf{b} no longer lies in the range of \mathsf{A}, the components (\mathsf{U}^\dagger\mathsf{b})_j for j>r are no longer guaranteed to vanish.) The two terms in the bracket are therefore orthogonal, and since \mathrm{U} is unitary, \mathrm{|A\hat{x}_{new}-b|^2=|(S\overline{S}-I)U^\dagger b|^2+|U^\dagger b'|^2}. Thus the residual is minimized only when \mathrm{b'}=0.

2. Basic conclusions:

(1) Schwarz’s inequality:

|\langle\mathbf{a}|\mathbf{b}\rangle|\leq\|\mathbf{a}\|\|\mathbf{b}\| (proved by expanding \|\mathbf{a}+\lambda\mathbf{b}\|^{2}=\langle\mathbf{a}+\lambda\mathbf{b}|\mathbf{a}+\lambda\mathbf{b}\rangle\geq0 and writing \langle\mathbf{a}|\mathbf{b}\rangle\mathrm{~=~}|\langle\mathbf{a}|\mathbf{b}\rangle|e^{i\alpha}).

(2) Triangle inequality:

\|\mathbf{a}+\mathbf{b}\|\leq\|\mathbf{a}\|+\|\mathbf{b}\| (hint: \langle\mathbf{a}|\mathbf{b}\rangle+\langle\mathbf{b}|\mathbf{a}\rangle=2 \mathrm{Re} \langle\mathbf{a}|\mathbf{b}\rangle, then use Schwarz's inequality).

(3) Bessel's inequality:

\|\mathbf{a}\|^2\geq\sum_i|\langle\mathbf{\hat{e}}_i|\mathbf{a}\rangle|^2 or \langle\mathbf{a}|\mathbf{a}\rangle\geq\sum_i|a_i|^2, where the \mathbf{\hat{e}}_i are orthonormal (proved by expanding \left\|\mathbf{a}-\sum_i\langle\mathbf{\hat{e}}_i|\mathbf{a}\rangle\mathbf{\hat{e}}_i\right\|^2\geq0).

(4) Parallelogram equality:

\|\mathbf{a}+\mathbf{b}\|^2+\|\mathbf{a}-\mathbf{b}\|^2=2\left(\|\mathbf{a}\|^2+\|\mathbf{b}\|^2\right)
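
The four results in this section can be spot-checked on complex vectors. This sketch assumes an orthonormal basis and two arbitrarily chosen vectors; the helper names are illustrative, and a numerical check is not a proof.

```python
import math

def inner(a, b):
    """<a|b> = sum_i conj(a_i) b_i in an orthonormal basis."""
    return sum(complex(x).conjugate() * y for x, y in zip(a, b))

def norm(a):
    return math.sqrt(inner(a, a).real)

def add(a, b):
    return [x + y for x, y in zip(a, b)]

def sub(a, b):
    return [x - y for x, y in zip(a, b)]

a = [1 + 2j, -1j, 3]
b = [2, 1 - 1j, 0.5j]

schwarz = abs(inner(a, b)) <= norm(a) * norm(b)
triangle = norm(add(a, b)) <= norm(a) + norm(b)
# Bessel with only the first two basis vectors kept in the sum
bessel = norm(a) ** 2 >= abs(a[0]) ** 2 + abs(a[1]) ** 2
parallelogram_lhs = norm(add(a, b)) ** 2 + norm(sub(a, b)) ** 2
parallelogram_rhs = 2 * (norm(a) ** 2 + norm(b) ** 2)
print(schwarz, triangle, bessel, parallelogram_lhs, parallelogram_rhs)
```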

3. Basic algebra:

(1) (A+B)x = Ax+Bx:

\begin{aligned}\sum_{j}(\mathsf{A}+\mathsf{B})_{ij}x_{j}&=\sum_{j}A_{ij}x_{j}+\sum_{j}B_{ij}x_{j} \end{aligned}.

(2) (\lambda A)x=\lambda(Ax):

\begin{aligned}\sum_{j}(\lambda\mathsf{A})_{ij}x_{j}&=\lambda\sum_{j}A_{ij}x_{j} \end{aligned}.

(3) (AB)x=A(Bx)=ABx:

\begin{aligned}\sum_{j}(\mathsf{AB})_{ij}x_{j}&=\sum_{k}A_{ik}(\mathsf{Bx})_{k}=\sum_{j}\sum_{k}A_{ik}B_{kj}x_{j} \end{aligned}.

(4) Matrix multiplies vector:

y_i=\sum_{j=1}^NA_{ij}x_j.

(5) Matrix multiplies matrix:

P_{ij}=\sum_{k=1}^NA_{ik}B_{kj}.

4. Special matrices:

(1) Diagonal matrices:

\mathrm{A}=\mathrm{diag}\left(A_{11},A_{22},\ldots,A_{NN}\right), then \mathrm{A}^{-1}=\mathrm{diag}\left(\frac{1}{A_{11}},\frac{1}{A_{22}},\ldots,\frac{1}{A_{NN}}\right).

(2) Lower and upper triangular matrices:

|\mathsf{A}|=A_{11}A_{22}\cdots A_{NN}.

(3) Symmetric and antisymmetric matrices:

Definition: \mathrm{A}^{\mathrm{T}}=\pm\mathrm{A}.

Any matrix can be rewritten as the sum of a symmetric and an antisymmetric matrix:

\mathrm{A=\frac{1}{2}(A+A^T)+\frac{1}{2}(A-A^T)=B+C}.

(4) Orthogonal matrices:

Definition: \mathbb{A}^{\mathrm{T}}=\mathbb{A}^{-1}.

(i) If \mathbf{y}=\mathcal{A} \mathbf{x}, then \mathrm{y^Ty=x^TA^TAx=x^Tx} which means an orthogonal operator does not change the norms of real vectors.

(ii) Beware that two matrices satisfying \mathrm{A}^{\dagger}\mathrm{A}=\mathrm{A}\mathrm{A}^{\dagger}=0 are called mutually orthogonal, which is a different notion from that of an orthogonal matrix.

(5) Hermitian and anti-Hermitian matrices:

Definition: \mathrm{A}^{\dagger}=\pm\mathrm{A}.

Any matrix can be rewritten as the sum of a Hermitian and an anti-Hermitian matrix:

\mathsf{A}=\frac12(\mathsf{A}+\mathsf{A}^{\dagger})+\frac12(\mathsf{A}-\mathsf{A}^{\dagger})=\mathsf{B}+\mathsf{C}.

Example:

Prove that the eigenvalues of an Hermitian matrix are real (solution's on page 276).

Example:

Prove that the eigenvectors corresponding to different eigenvalues of an Hermitian matrix are orthogonal (solution's on page 277).

(6) Unitary matrices:

Definition: \mathbb{A}^{\mathrm{\dagger}}=\mathbb{A}^{-1} .

(i) If \mathbf{y}=\mathcal{A} \mathbf{x}, then \mathrm{y^{\dagger}y=x^{\dagger}A^{\dagger}Ax=x^{\dagger}x}, which means a unitary operator does not change the norms of complex vectors.

(ii) Its inverse is also a unitary matrix.

(iii) Its determinant has unit modulus.

(iv) Its eigenvalues satisfy \lambda\lambda^{*}=|\lambda|^{2}=1.

(7) Normal matrices:

Definition: \mathsf{AA}^{\dagger}=\mathsf{A}^{\dagger}\mathsf{A}.

Hermitian matrices, unitary matrices, symmetric matrices(real), and orthogonal matrices(real) are normal matrices.

If \mathrm{A} is normal, so is \mathrm{A}^{-1}; if \mathrm{A}x^i=\lambda_{i} x^{i}, then \mathrm{A}^{\dagger}x^i=\lambda_{i}^* x^{i}.

Example:

If \mathrm{A}x=\lambda x, find eigenvalue of \mathrm{A}^{\dagger}(solution's on page 274: set B=A-\lambda I).

Example:

Prove that if \lambda_i\neq\lambda_j then the eigenvectors x^i and x^j of a normal matrix must be orthogonal (solution's on page 275).

5. Special methods:

(1) Gram-Schmidt orthogonalization:

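By Gram-Schmidt orthogonalization the eigenvectors x^i (including those belonging to degenerate eigenvalues) can be made mutually orthogonal, and by normalizing them we obtain an orthonormal set. Any arbitrary vector y can then be expressed as a linear combination of the eigenvectors: y=\sum_{i=1}^Na_ix^i, where a_{i}=(x^{i})^{\dagger}y.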
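
The procedure can be sketched in a few lines. This version subtracts projections one at a time (the "modified" Gram-Schmidt ordering, chosen here for numerical stability) and drops vectors that turn out to be linearly dependent; the function names, the tolerance and the sample vectors are assumptions for illustration.

```python
import math

def inner(a, b):
    """<a|b> = sum_i conj(a_i) b_i."""
    return sum(complex(x).conjugate() * y for x, y in zip(a, b))

def gram_schmidt(vectors, tol=1e-12):
    """Return an orthonormal list spanning the same space as `vectors`."""
    basis = []
    for v in vectors:
        w = list(v)
        for e in basis:
            c = inner(e, w)                          # component of w along e
            w = [wi - c * ei for wi, ei in zip(w, e)]
        n = math.sqrt(inner(w, w).real)
        if n > tol:                                  # skip linearly dependent vectors
            basis.append([wi / n for wi in w])
    return basis

vectors = [[1, 1, 0], [1, 0, 1], [2, 2, 0], [0, 1, 1]]  # the third is 2x the first
basis = gram_schmidt(vectors)

# Expand an arbitrary y in the orthonormal set: a_i = <e_i|y>
y = [3, -1, 2]
coeffs = [inner(e, y) for e in basis]
rebuilt = [sum(c * e[k] for c, e in zip(coeffs, basis)) for k in range(3)]
print(len(basis), rebuilt)
```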

(2) Change of basis and similarity transformation:

(i) Old basis to new basis: \mathbf{e}_j^{\prime}=\sum_{i=1}^NS_{ij}\mathbf{e}_i.

(ii) New vector to old vector: x_i=\sum_{j=1}^NS_{ij}x_j^{\prime} or \mathrm{x=Sx^{\prime}}.

(iii) Old vector to new vector: \mathrm{x^{\prime}=S^{-1}x}.

(iv) Old operator to new operator: \mathrm{A^{\prime}=S^{-1}AS}.

(v) Properties of similarity transformation:

a. if A=I, then \mathrm{A^{\prime}=S^{-1}IS=S^{-1}S=I}

b. Determinant unchanged:

|A^{\prime}|=|S^{-1}AS|=|S^{-1}||A||S|=|A||S^{-1}||S|=|A||S^{-1}S|=|A|

c. Eigenvalues unchanged:

|\mathrm{A}^{\prime}-\lambda \mathrm{I}| =|\mathrm{S}^{-1}\mathrm{AS}-\lambda\mathrm{I}|=|\mathrm{S}^{-1}(\mathrm{A}-\lambda\mathrm{I})\mathrm{S}| =|\mathrm{S}^{-1}||\mathrm{S}||\mathrm{A}-\lambda\mathrm{I}|=|\mathrm{A}-\lambda\mathrm{I}|

d. Trace unchanged:

\begin{aligned} \mathrm{TrA'} & =\sum_{i}A_{ii}^{\prime}=\sum_{i}\sum_{j}\sum_{k}(\mathsf{S}^{-1})_{ij}A_{jk}S_{ki} \\ & =\sum_{i}\sum_{j}\sum_{k}S_{ki}(\mathsf{S}^{-1})_{ij}A_{jk}=\sum_{j}\sum_{k}\delta_{kj}A_{jk}=\sum_{j}A_{jj} \\ & =\mathrm{Tr}\mathrm{A} \end{aligned}
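
Properties b to d can be confirmed on a small example. This sketch uses exact fractions and a hypothetical 2×2 pair A, S (with |S|=1) chosen for illustration; the helper names are assumptions.

```python
from fractions import Fraction

def matmul(A, B):
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))] for i in range(len(A))]

def inv2(S):
    """Inverse of a 2x2 matrix via the cofactor formula."""
    a, b, c, d = S[0][0], S[0][1], S[1][0], S[1][1]
    det = a * d - b * c
    return [[Fraction(d, det), Fraction(-b, det)],
            [Fraction(-c, det), Fraction(a, det)]]

def det2(A):
    return A[0][0] * A[1][1] - A[0][1] * A[1][0]

def trace(A):
    return A[0][0] + A[1][1]

A = [[4, 1], [2, 3]]
S = [[1, 2], [1, 3]]
A_new = matmul(inv2(S), matmul(A, S))   # A' = S^{-1} A S
print(A_new, det2(A), det2(A_new), trace(A), trace(A_new))
```

Here A' comes out upper triangular, so its diagonal entries 5 and 2 are its eigenvalues; they are also the roots of \lambda^2-7\lambda+10=0, the characteristic equation of A.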

(vi) Properties of unitary transformation(S^{\dagger}S=I):

a. If original basis \mathbf{e}_i is orthonormal then \mathbf{e}_i^{'} is also orthonormal:

\begin{aligned} \langle\mathbf{e}_i^{\prime}|\mathbf{e}_j^{\prime}\rangle & =\langle\sum_{k}S_{ki}\mathbf{e}_{k}|\sum_{r}S_{rj}\mathbf{e}_{r}\rangle =\sum_{k}S_{ki}^{*}\sum_{r}S_{rj}\langle\mathbf{e}_{k}|\mathbf{e}_{r}\rangle \\ & =\sum_{k}S_{ki}^{*}\sum_{r}S_{rj}\delta_{kr}=\sum_{k}S_{ki}^{*}S_{kj}=(\mathbf{S}^{\dagger}\mathbf{S})_{ij}=\delta_{ij} \end{aligned}

b. If A is Hermitian or anti-Hermitian then A' is also the same:

(\mathbf{A}^{\prime})^{\dagger}=(\mathbf{S}^{\dagger}\mathbf{A}\mathbf{S})^{\dagger}=\mathbf{S}^{\dagger}\mathbf{A}^{\dagger}\mathbf{S}=\pm\mathbf{S}^{\dagger}\mathbf{A}\mathbf{S}=\pm\mathbf{A}^{\prime}.

c. If A is unitary then A' is also unitary:

\begin{aligned} (\mathsf{A}^{\prime})^{\dagger}\mathsf{A}^{\prime} =(\mathsf{S}^\dagger\mathsf{AS})^\dagger(\mathsf{S}^\dagger\mathsf{AS})=\mathsf{S}^\dagger\mathsf{A}^\dagger\mathsf{SS}^\dagger\mathsf{AS}=\mathsf{S}^\dagger\mathsf{A}^\dagger\mathsf{AS} =\mathsf{S}^\dagger\mathsf{IS}=\mathsf{I} \end{aligned}.

(3) Diagonalization:

(i) The diagonalization matrix S is constructed by eigenvectors of A:

\mathbf{S}=\left( \begin{array} {cccc}\uparrow & \uparrow & & \uparrow \\ \mathbf{x}^1 & \mathbf{x}^2 & \cdots & \mathbf{x}^N \\ \downarrow & \downarrow & & \downarrow \end{array}\right), where the x^j are all linearly independent and form a basis for the N-dimensional vector space. (But if A has degenerate eigenvalues then it may not have N linearly independent eigenvectors, in which case it cannot be diagonalized.)

This can be proved by noting that the jth column of \mathrm{AS} is \mathrm{A}\mathbf{x}^j=\lambda_j\mathbf{x}^j, so \mathrm{AS}=\mathrm{S}\Lambda with \Lambda=\mathrm{diag}(\lambda_1,\ldots,\lambda_N), and hence \mathrm{S}^{-1}\mathrm{AS}=\Lambda.

(ii) Normal matrices have N linearly independent eigenvectors \Rightarrow any normal matrix can be diagonalized.

(iii) If the eigenvectors of A form an orthonormal set then we can easily find the inverse of S: \mathsf{S}^{-1}=\mathsf{S}^{\dagger}, because:

(\mathbf{S}^{\dagger}\mathbf{S})_{ij}=\sum_{k}(\mathbf{S}^{\dagger})_{ik}(\mathbf{S})_{kj}=\sum_{k}\mathbf{S}_{ki}^{*}\mathbf{S}_{kj}=\sum_{k}(\mathbf{x}^{i})_{k}^{*}(\mathbf{x}^{j})_{k}=(\mathbf{x}^{i})^{\dagger}\mathbf{x}^{j}=\delta_{ij}
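
A small real symmetric matrix shows both statements at once. In this sketch the matrix A and its normalized eigenvectors (1,1)/\sqrt2 and (1,-1)/\sqrt2 are assumptions chosen so that the result can be checked by hand.

```python
import math

def matmul(A, B):
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))] for i in range(len(A))]

def dagger(M):
    """Hermitian conjugate: transpose and complex-conjugate."""
    return [[M[j][i].conjugate() for j in range(len(M))] for i in range(len(M[0]))]

s = 1 / math.sqrt(2)
A = [[2.0, 1.0], [1.0, 2.0]]
S = [[s, s], [s, -s]]   # columns: normalized eigenvectors for lambda = 3 and lambda = 1

S_dag_S = matmul(dagger(S), S)                  # should be the identity
A_diag = matmul(dagger(S), matmul(A, S))        # should be diag(3, 1)
print(S_dag_S, A_diag)
```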

(iv) Trace formula: |\exp\mathrm{~A}|=\exp(\mathrm{Tr~A})(see page 287).
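
The trace formula can be checked numerically by summing the series \exp\mathrm{A}=\sum_n\mathrm{A}^n/n!. This is a sketch with a truncated series (30 terms, adequate for the small hypothetical matrix used here); the function name `mat_exp` is an assumption.

```python
import math

def matmul(A, B):
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))] for i in range(len(A))]

def mat_exp(A, terms=30):
    """Truncated power series for exp(A)."""
    n = len(A)
    result = [[float(i == j) for j in range(n)] for i in range(n)]
    term = [row[:] for row in result]               # A^0 / 0!
    for k in range(1, terms):
        term = [[x / k for x in row] for row in matmul(term, A)]   # A^k / k!
        result = [[r + t for r, t in zip(rr, tr)] for rr, tr in zip(result, term)]
    return result

A = [[0.5, 1.0], [0.2, -0.3]]
E = mat_exp(A)
det_exp = E[0][0] * E[1][1] - E[0][1] * E[1][0]
exp_trace = math.exp(A[0][0] + A[1][1])
print(det_exp, exp_trace)   # the two values agree to rounding error
```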
